Product Compatibility

Refer to product compatibility to determine supported Operating Systems and Database Versions.

Download opHA here - https://opmantek.com/network-management-download/opha-downloads/

For opHA 4, see opHA 4 Release Notes.

Introduction

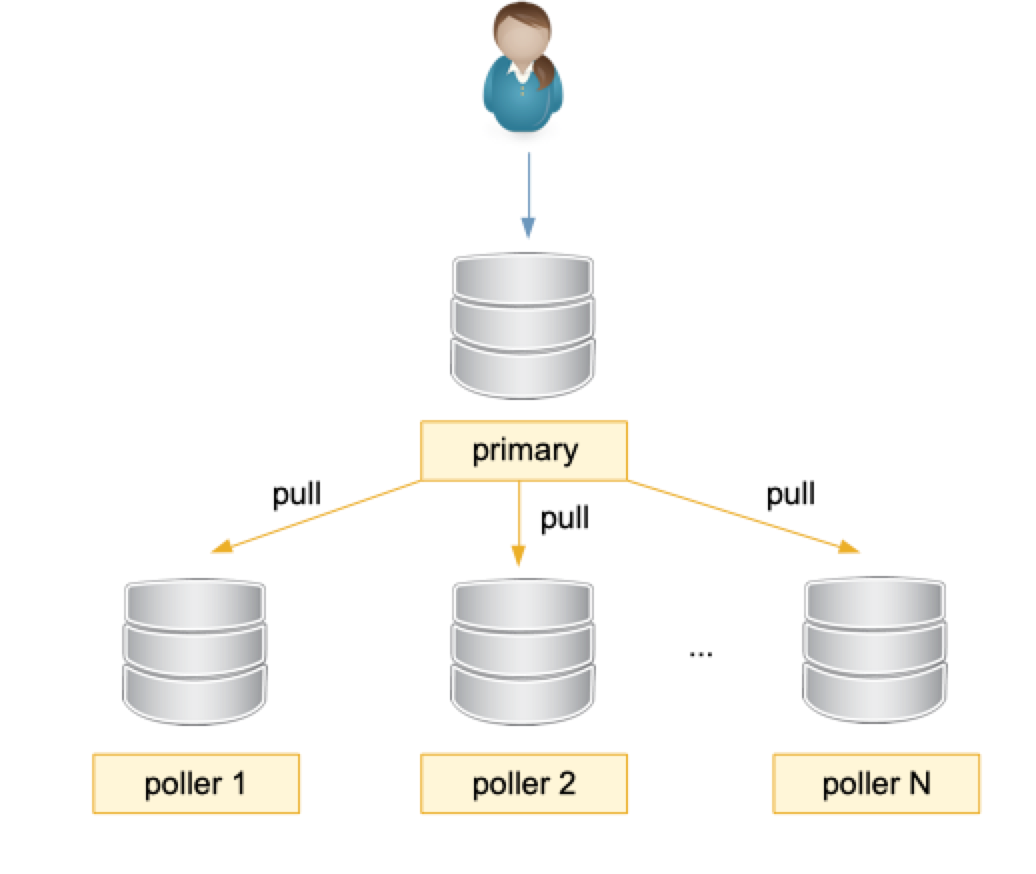

opHA introduces the concept of a Primary server and multiple Poller servers:

- The Primary is the server that stores the information from all the pollers, and it is where all of that information can be read.

- The Pollers collect their own data and send it to the Primary when it is requested.

The Primary is responsible for synchronising node information. It requests the information from each poller using pull requests.

The first time the pull script runs, it requests all the data from each configured poller. On subsequent runs, it requests only the data modified since the last synchronisation.
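The full-then-incremental pull described above can be sketched in Python. This is a minimal illustration rather than opHA's implementation: the `Record` type, the `pull` function, and the comparison on a `last_update` timestamp are assumptions made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    """Hypothetical poller record; last_update is a Unix timestamp."""
    name: str
    last_update: float


def pull(poller_records: list[Record], last_sync: Optional[float]) -> list[Record]:
    """Return the records the Primary would request from one poller.

    last_sync is None on the first run, so every record is returned.
    On later runs only records modified after last_sync are returned.
    """
    if last_sync is None:
        return list(poller_records)
    return [r for r in poller_records if r.last_update > last_sync]


records = [Record("router1", 100.0), Record("switch1", 250.0)]
first_run = pull(records, last_sync=None)    # full synchronisation
second_run = pull(records, last_sync=200.0)  # incremental synchronisation
```

In this sketch the first run returns both records, while the second returns only the record modified after the stored synchronisation time.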

Releases

3.6.2

RELEASED 8th March 2023

- An opHA peer will now unlock itself after 15 minutes. Use opha_max_lock in opCommon.json to change this timeout.

- The peer GUI now shows whether a peer is locked and provides an option to unlock it.

- opha_allow_insecure has been implemented across the whole application.

- Fixed an issue where a peer would show a stale status message after the primary role had been changed.

- GUI fixes for the peers view.

- Improved debug messages for the CLI.
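The automatic unlock can be pictured as an age check on the lock. The Python sketch below is illustrative only: `lock_expired`, its parameters, and expressing the timeout in seconds are assumptions for the example, not opHA's actual code or the units used by opha_max_lock.

```python
import time

# Assumed default: 15 minutes, expressed in seconds for this illustration.
DEFAULT_MAX_LOCK_SECONDS = 15 * 60


def lock_expired(locked_at: float, now: float,
                 max_lock: int = DEFAULT_MAX_LOCK_SECONDS) -> bool:
    """Return True when a lock taken at locked_at is at least max_lock seconds old."""
    return (now - locked_at) >= max_lock


now = time.time()
print(lock_expired(now - 16 * 60, now))  # lock held for 16 minutes
print(lock_expired(now - 5 * 60, now))   # lock held for 5 minutes
```

Passing the current time in as a parameter keeps the check easy to exercise without waiting for a real timeout.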

3.6.1

RELEASED 19th December 2022

Fixed an issue where the sync-all-nodes function in the GUI and CLI would not use the opha_allow_insecure configuration option.

3.6.0

RELEASED 23 November 2022

Big release with an upgraded GUI framework that delivers accessibility enhancements, including support for Dark Mode.

While tidying up the screens, we also took the opportunity to change Opmantek to FirstWave. These new features are backward compatible with earlier releases.

- Fixed issue where Perl would throw a warning about ../Mojo/Asset/Memory.pm

3.5.2

RELEASED 18th August 2022

Support for MongoDB 4.2; please see Upgrading to MongoDB 4.2.

Changed the installer to not touch the server role if it has already been set in opCommon.json.

3.5.1

RELEASED 4th August 2022

This was an internal release

3.4.3

RELEASED 14 Apr 2022

- Rediscover providing username and password, available in opha-cli. Example:

bin/opha-cli.pl act=rediscover peer=PEERNAME user=USER password=PWD debug=6

- Desynchronisation of events: The delete events cron job can now be removed. The delete is run after the pull of events when delete_events=true is passed as a parameter to the pull job.

- Improved error handling in the Nodes API.

- Centralised Distribution of Node Configuration by opHA

- New API to send configuration items

- opHA will distribute the API Key when it is a primary and encryption is set.

- ! Important note: After upgrading to opHA 3.4.3, the role will be set to Standalone. You will need to Set Role to restore the role.

3.4.2

RELEASED 15 Mar 2022

- Fixed error returning the message synchronously when doing a pull request from the GUI.

- New configuration item, opha_allow_insecure, to avoid insecure connections when set to 1. The default value is 0.

- New CLI option to assign roles to peers.

3.4.1-1

RELEASED 15 Feb 2022

- Fixed error in creating conf.d directory when the NMIS property nmis_conf_ext is not set.

- Fixed an error in the manifest version.

3.4.1

RELEASED 9 Feb 2022

- Fixed the error "Can't call method "clone" on an undefined value" in the pull job.

- Error handling was improved in node administration.

- Configuration files can be removed as a bulk operation, not one by one.

- Solved the issue with event desynchronisation. A new cron job checks for these desynchronised events.

3.4.0

RELEASED 1 Dec 2021

- Updated core dependencies

- Cookies now support samesite strict, see Security Configurations

3.3.3-1

RELEASED 10 Nov 2021

This release includes:

- Double check for the local nodes count.

- New configuration option, opha_max_diff_allowed. If the local and remote node catalogs differ by more than the opha_max_diff_allowed number, no deletes are performed.
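The safeguard above can be sketched as a simple threshold check. This Python example is an illustration under stated assumptions: it assumes the difference is measured as the gap between node counts, and `deletes_allowed` and its parameters are hypothetical names, not part of opHA.

```python
def deletes_allowed(local_count: int, remote_count: int,
                    max_diff_allowed: int) -> bool:
    """Return False when the catalogs differ by more than max_diff_allowed nodes.

    Assumption: the difference is the absolute gap between the node counts.
    """
    return abs(local_count - remote_count) <= max_diff_allowed


# A small drift is tolerated; a large one blocks every delete.
print(deletes_allowed(local_count=100, remote_count=98, max_diff_allowed=5))
print(deletes_allowed(local_count=100, remote_count=50, max_diff_allowed=5))
```

A guard of this kind stops a poller that suddenly reports far fewer nodes, for example after a partial failure, from triggering mass deletion on the Primary.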

3.3.3

RELEASED 8 Nov 2021

This release includes:

- Implemented a lock for critical operations.

- New opha-cli operations added:

  - act=lock_peer

  - act=unlock_peer

  - act=peer_islocked

- pull can unlock a peer after it has been locked for 15 minutes. This timeout can be changed with the opha_max_lock configuration item.

- Improved opha-cli operations:

  - act=data-verify now also shows duplicate nodes and catchall data duplicates.

  - Also added to the GUI.

- Improved GUI to disable buttons when an operation is in progress.

- To enable extra debugging logs:

  - opCommon.json: node_update_logs: true

  - nmis9, Config.nmis: audit_enabled: true

3.3.2

RELEASED 19 Oct 2021

This release includes:

- Redundancy in primary servers.

- Authorisation for users in the GUI functions. The administrator can do all read and write operations, other users can just read.

- System menu updated.

- Use a password field in Discoveries from the GUI.

- Updated expire_at fields from inventory; a wrong format was being saved.

3.3.1

RELEASED 24 Aug 2021

This release includes:

- Fixed a bug where the correct nodes were not synchronised in specific cluster configurations with a mirror.

- Fixed a bug in viewing effective configuration files from the pollers (when the poller has configuration files overriding the configuration).

- Updated help links.

- Added a clean_data cli tool action to clean up the data for one peer.

3.3.0

RELEASED 10 Jun 2021

This release includes two new features:

- New System Configuration Menu: To manage nodes and configuration files.

- Redundant node polling and Centralised Node Management: With peers that work as a backup for other pollers.

Other small improvements have been made in the GUI.

3.2.1

RELEASED March 10, 2021

Upgrade Notes

This release resolves issues with MongoDB connection leaks through our applications.

3.2.0

RELEASED Sept 30, 2020

Upgrade Notes

The upcoming release of opHA 3 will work on Opmantek's latest and fastest platform. However, the currently installed products are incompatible with this upgrade.

To find out more about this upgrade please read: Upgrading Opmantek Applications

3.1.2

RELEASED Jul 03, 2020

- Fix for configuration files that were not being read from the conf.d directory (opCommon and Config).

- Removed an error from the logs about not being able to read conf.d.

- It is no longer possible to discover a peer with the same cluster_id as another poller or the Primary.

- Updated cli tool get_own_config.

- Internal minor fixes in the installer:

  - The support tool is now updated.

  - Updated the function for converting JSON files in common-cli.

3.1.1

RELEASED Jun 30, 2020

- Fix for configuration files with a duplicate extension. The GUI now uses the extension, as there are two file formats: NMIS and JSON.

- Internal minor fixes in the installer.

3.1.0

RELEASED Jun 24, 2020

- JSON Configuration files: The .nmis configuration files will be replaced by .json files.

- New License 2.0 structure used.

- ! Important note: Due to the JSON configuration files upgrade, updating to this version requires upgrading all installed OMK products (not NMIS) to at least the X.1. version. It also requires a license update due to the new license structure.

3.0.8 BETA

Released Jun 2, 2020

- Bugfix: The Discover peer window was closing when required fields were missing.

- Bugfix: The last_update field is now updated on synchronisation. Previously, some data was not being updated on the Primary and the expire_at field for events was not being updated in all the documents.

- New cli cleanup functions to clean data from the cli tool.

- Added new cli function get_status to get the status for each poller in JSON format.

- Some minor bug fixes and internal improvements.

3.0.7 BETA

Released December 26, 2019

opHA 3.0.7 requires NMIS 9.0.6

- Added new features to the centralised configuration from the Primary with support for OMK and NMIS files:

  - Added new configuration file types.

  - Remove files from pollers.

  - Added a button with rollback instructions.

- Improved landing page with information about the peers. The peers now have a status API that checks the daemons and the database status.

- Send the role to the pollers: If a role is set to poller, the user cannot use the GUI functions.

- Added conf.d to the support zip.

- New opHA cleanup functions.

- View resulting configuration files.

3.0.5 BETA

Released August 22, 2019

opHA 3.0.5 requires NMIS 9.0.6

- Support for centralised configuration from the Primary with support for OMK and NMIS files.

3.0.4 BETA

- Support for NMIS 9 to show poller nodes from the Primary.

- A new button has been added to the peers screen to manually edit the poller's URL.

3.0.3 BETA

- Support for node deletion on the pollers. Previously, when a node was deleted on the poller, its data was not removed from the Primary. Now the pull process removes nodes, and their associated data, that were removed on the poller.

- Retry policy on pull failures: Previously, if there was a failure during the poller update, the pull simply finished. Now it is possible to specify a retry policy:

  - retry number: How many times to retry a request before finishing the process unsuccessfully. The default value is 3.

  - delay: How many seconds to wait between retries (5 seconds by default).

- These parameters can be modified in opCommon.nmis:

    'opha_transfer_chunks' => {
        'inventory' => 500,
        'nodes' => 500,
        'events' => 500,
        'status' => 500,
        'latest_data' => 500,
        'retry_number' => 3,
        'delay' => 5
    },

Note that if no option is specified, no retries are performed.

Also, note that if a poller is down, the process will take retry_number * delay seconds to finish.

- Show the registry synchronised data on the GUI.

- Small improvements on the GUI.
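The retry policy from this release can be sketched in Python as follows. This is a hedged illustration, not the pull job's actual code: `request_with_retries` and the injectable `sleep` parameter are hypothetical, and only the `retry_number` and `delay` names and their defaults come from the notes above.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def request_with_retries(request: Callable[[], T], retry_number: int = 3,
                         delay: float = 5.0,
                         sleep: Callable[[float], None] = time.sleep) -> T:
    """Call request(); on failure retry up to retry_number times.

    The function waits delay seconds before each retry and re-raises the
    last error once all retries are used up.
    """
    attempt = 0
    while True:
        try:
            return request()
        except Exception:
            if attempt >= retry_number:
                raise
            attempt += 1
            sleep(delay)


# Demonstration with a recorded (not real) sleep, so nothing actually waits.
waits = []


def poller_down():
    raise ConnectionError("poller unreachable")


try:
    request_with_retries(poller_down, retry_number=3, delay=5, sleep=waits.append)
except ConnectionError:
    print(f"gave up after waiting {sum(waits)} seconds")
```

With the defaults, an unreachable poller costs three waits of five seconds each, which matches the retry_number * delay estimate given above.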

3.0.2-1 BETA

Hot fix for a problem viewing the poller's node graphs from the Primary.

3.0.2 BETA

In this version, the Primary requests the information in chunks. The number of calls is based on the chunk size and the number of results. The chunk size can be modified in conf/opCommon.nmis on the poller with the following parameter (note that a service restart is needed for the new parameters to take effect):

|

- The configured pollers can be seen/modified on this page: http://Primary/en/omk/opHA/peers/

You can see how many calls will be performed for each peer by sending this request to that poller: http://poller/en/omk/opHA/api/v1/chunks
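As a rough guide to the relationship between chunk size and number of calls, the Python sketch below assumes one call per full or partial chunk. That formula is an assumption, since the notes only say the count is based on the chunk size and the number of results. `calls_needed` is a hypothetical helper, and the default of 500 is borrowed from the opha_transfer_chunks example in the 3.0.3 notes.

```python
import math


def calls_needed(total_results: int, chunk_size: int = 500) -> int:
    """Estimate the number of requests needed to transfer total_results items.

    Assumption: one call per chunk, with a final partial chunk counted as a call.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be a positive integer")
    return math.ceil(total_results / chunk_size)


for total in (0, 500, 1200):
    print(total, "results ->", calls_needed(total), "call(s)")
```

For actual figures, the chunks API request mentioned above remains the authoritative source.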

By default, all the data types are enabled, so all the data types are being synchronised (nodes, inventory, events, latest_data and status).

The synchronisation is configured as a cron job that runs /usr/local/omk/bin/opha-cli.pl act=pull. For debugging purposes, you can run the same script with the following parameters:

|

Use force=true to fetch all the data again, not only the data modified or created since the last synchronisation.

You should see something like this when the script has finished:

|